The pilot prototype of this use case consists of three components that together provide a collaborative VR training module for mobile HMD users on top of the ACCORDION platform. The OVR application is redesigned so that certain application components can be migrated to the ACCORDION minicloud. For offloading, the “full” computation offloading option is favored: the whole computing part of the application is offloaded, leaving the mobile device responsible only for input and output. This approach is preferable to task offloading, in which only a specific task such as rendering is offloaded, because the modules related to rendering are rarely independent.

The HMD is responsible for sending rotation/translation data and events, and for decoding the rendered image before it is displayed. The computation node in the edge minicloud, also referred to as the Local Service (LS), handles all processing related to the MAGES SDK and the Unity game engine, and also stores the VR scene, data assets and avatars. Multiple LS instances can be created on the same node to support streaming to multiple HMDs. Relay servers are also used to support multicasting, dynamic host migration and game state continuity when multiple users collaborate in the same session and the same virtual environment; the ACCORDION platform selects the appropriate resource on which to deploy this service based on the users’ location footprints. Additional third-party software supports the redesign of the application prototype:

Photon: Cross-platform networking solution used in the application. It combines common low-latency network communication methods (peer-to-peer with a mandatory relay server connection) and provides the required integration with the Unity engine.

WebRTC: Cross-platform framework that enables real-time communication (RTC) for mobile devices and browsers. Establishing communication between the participants is left to the user of the framework and is done via a signaling server. The prototype uses the Unity team's implementation of the WebRTC protocol, which supports efficient streaming of rendered output as video to recipients, as well as audio processing results and input (via a custom data stream).

Two indicative scenarios are presented below:

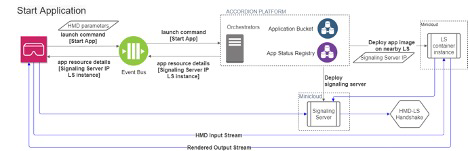

Start application: the user starts the application from the HMD, which sends the launch command over the event bus to the ACCORDION platform, and the application image is deployed on the Local Service (LS). The application uses the open-source, cross-platform Render Streaming Unity package to stream between endpoints over WebRTC. WebRTC relies on a signaling solution that involves a third-party server in addition to the two peers trying to connect to each other.

Upon a request from the HMD to launch the application, an instance of the LS part and a signaling server are created and managed by ACCORDION. A connection is then established between the LS part and the user application on the HMD via the signaling server. This connection may use 5G, which enables high-speed communication between the two endpoints.
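The signaling step described above can be illustrated with a minimal sketch. It is not the Render Streaming package's signaling server: the class names, the peer identifiers `hmd` and `ls`, the placeholder payloads, and the assumption that the HMD sends the offer are all hypothetical and serve only to show how a third party relays offer and answer messages between two peers before they connect.

```python
from collections import defaultdict, deque
from dataclasses import dataclass


@dataclass
class SignalingMessage:
    sender: str     # hypothetical peer id, e.g. "hmd" or "ls"
    recipient: str  # peer id the message is addressed to
    kind: str       # "offer", "answer" or "candidate"
    payload: str    # opaque session description or candidate (placeholder here)


class InMemorySignalingServer:
    """Toy relay that stores messages until the recipient polls for them."""

    def __init__(self):
        self._inbox = defaultdict(deque)

    def send(self, message):
        # Queue the message for its recipient; the server never inspects the payload.
        self._inbox[message.recipient].append(message)

    def poll(self, peer_id):
        # Return and clear all messages currently waiting for this peer.
        inbox = self._inbox[peer_id]
        messages = list(inbox)
        inbox.clear()
        return messages


def negotiate(server):
    """Run one offer/answer exchange between the HMD and the LS (assumed roles)."""
    # Assumption: the HMD application initiates the exchange with an offer.
    server.send(SignalingMessage("hmd", "ls", "offer", "sdp-offer-placeholder"))

    # The LS picks up the offer and replies to whoever sent it.
    offer = server.poll("ls")[0]
    server.send(SignalingMessage("ls", offer.sender, "answer", "sdp-answer-placeholder"))

    # The HMD picks up the answer; from here the peers could connect directly.
    answer = server.poll("hmd")[0]
    return [offer.kind, answer.kind]
```

In a real deployment the payloads would be session descriptions and connectivity candidates produced by the WebRTC stack, and the relay would run as a network service rather than an in-process object.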

The application can then stream its video output (the actual application view) to the headset while receiving the user’s input stream (controller positions, button presses, etc.) over WebRTC. This enables low-end VR headsets to deliver a high-quality experience.
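As an illustration of the input stream, the sketch below packs one controller sample into a compact binary message of the kind that could be sent over a data stream. The wire layout (three position floats, four rotation quaternion floats and a 16-bit button bitmask, little-endian) and all names are assumptions made for this example; the prototype's actual message format is not specified here.

```python
import struct
from dataclasses import dataclass

# Hypothetical wire layout: little-endian, 3 floats (position),
# 4 floats (rotation quaternion), 1 unsigned 16-bit button bitmask.
_INPUT_FORMAT = "<3f4fH"


@dataclass
class ControllerInput:
    position: tuple  # (x, y, z)
    rotation: tuple  # quaternion (x, y, z, w)
    buttons: int     # one bit per button, 1 = pressed


def encode_input(sample):
    """Serialize one controller sample into a fixed-size byte string."""
    return struct.pack(_INPUT_FORMAT, *sample.position, *sample.rotation, sample.buttons)


def decode_input(data):
    """Rebuild a controller sample from bytes produced by encode_input."""
    values = struct.unpack(_INPUT_FORMAT, data)
    return ControllerInput(tuple(values[0:3]), tuple(values[3:7]), values[7])
```

A fixed-size binary layout keeps each input message small, which matters because input samples are sent far more often than any other control message. Note that positions and rotations are stored as 32-bit floats, so values that are not exactly representable in that precision will differ slightly after a round trip.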

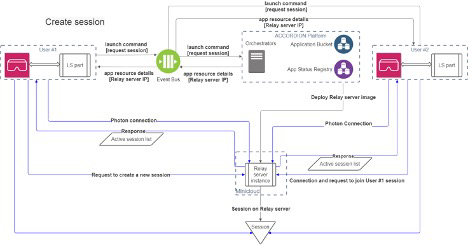

Create session: the user can either play the operation alone or collaborate with others. For the latter, the user opens the session list interface on the HMD and requests the relay server address through the ACCORDION platform. ACCORDION takes care of the necessary steps to deploy the Photon relay server instance on the available minicloud resource selected by the platform. The information is sent back to the LS and, once a direct connection with the relay server instance is established, the user retrieves the list of active sessions. The user can join any session hosted on that server by other users, or host a new session.
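The join-or-host choice at the end of this scenario can be sketched as a small in-memory session directory. This is not the Photon API: the class, its methods and the session and user names are hypothetical and only model the behaviour described above, namely listing active sessions, hosting a new one and joining an existing one.

```python
from dataclasses import dataclass, field


@dataclass
class Session:
    name: str
    host: str
    participants: list = field(default_factory=list)


class RelaySessionDirectory:
    """Toy stand-in for the session list kept by a relay server instance."""

    def __init__(self):
        self._sessions = {}

    def list_sessions(self):
        # Names of all active sessions, sorted for a stable listing.
        return sorted(self._sessions)

    def host(self, name, user):
        # Create a new session with the given user as host and first participant.
        if name in self._sessions:
            raise ValueError(f"session {name!r} already exists")
        session = Session(name, user, [user])
        self._sessions[name] = session
        return session

    def join(self, name, user):
        # Add the user to an existing session; raises KeyError if it does not exist.
        session = self._sessions[name]
        if user not in session.participants:
            session.participants.append(user)
        return session
```

For example, a first user could call `host("knee-surgery", "alice")`, after which a second user sees `"knee-surgery"` in `list_sessions()` and calls `join("knee-surgery", "bob")`.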

This prototype is built on top of the MAGES SDK, which provides a set of commonly required features, such as high-quality interpolation and a Co-Op compatible VR interaction system, for creating medical training modules that support multiple VR HMDs (Vive, Oculus, etc.).

Author: Maria Pateraki | OVR and Michael Dodis | OVR